Why I Built Git-Based Version Control for n8n

I lost a workflow once. Not a simple test workflow—a production automation that handled customer data processing. I had made what seemed like a small change, tested it briefly, and saved. Within an hour, I realized the change broke a critical edge case. And I had no way to see what the workflow looked like before.

That moment forced me to face something uncomfortable: I was running business-critical automation without version control. I wouldn’t write code without Git, but somehow I’d convinced myself that n8n workflows were different. They weren’t.

The problem got worse when I started collaborating. A team member would update a shared workflow, and I’d have no idea what changed. We’d accidentally overwrite each other’s work. When something broke, we’d waste time trying to figure out who changed what.

I needed proper version control, but n8n’s native source control wasn’t an option—I run the Community Edition, and that feature requires Enterprise. So I built my own system using n8n’s API and GitHub nodes.

My Setup: Automated Backups with Schedules and Webhooks

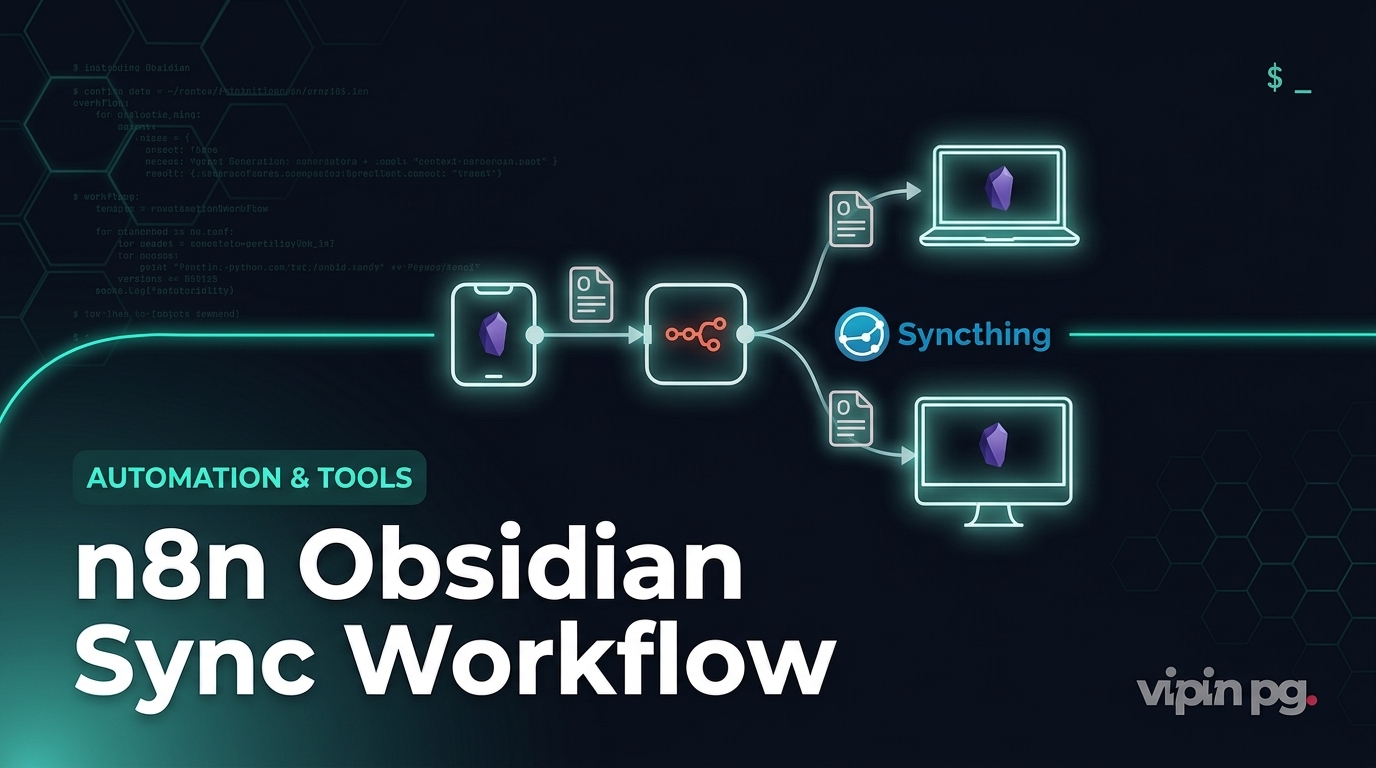

My solution has two parts: scheduled backups that run automatically, and webhook-triggered backups that capture changes immediately when I save a workflow.

The Backup Workflow

Every night at 2 AM, a workflow runs that:

- Fetches all workflows from my n8n instance via the API

- Loops through each workflow

- Checks if the workflow already exists in my GitHub repository

- Either creates a new file or updates the existing one

- Commits with a message that includes the workflow name and timestamp

This gives me a daily snapshot of everything. If something goes wrong during the day, I can at least roll back to last night’s version.
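To make the create-or-update decision in that list concrete, here is a minimal JavaScript sketch. It is not the n8n workflow itself: `planBackup`, the `existingShas` map, and the `toPath` helper are hypothetical names used only for illustration.

```javascript
// Decide, for each workflow, whether its backup file must be created or updated.
// `existingShas` maps repository paths to the SHA GitHub reported for that file.
// `toPath` is a hypothetical helper that turns a workflow into a repository path.
function planBackup(workflows, existingShas, toPath) {
  return workflows.map((workflow) => {
    const path = toPath(workflow);
    const sha = existingShas.get(path);
    return sha === undefined
      ? { action: "create", path, workflow }
      : { action: "update", path, sha, workflow };
  });
}

const plan = planBackup(
  [
    { id: "1", name: "email-automation" },
    { id: "2", name: "new-report" },
  ],
  new Map([["workflows/email-automation.json", "abc123"]]),
  (workflow) => `workflows/${workflow.name}.json`
);

console.log(plan.map((step) => `${step.action} ${step.path}`));
```

Each step in the plan then becomes one commit, so a failure can be traced to a single workflow.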

Immediate Backup on Save

The daily backup wasn’t enough. I wanted every save captured immediately, the way each commit captures a change to code. So I built a second workflow triggered by n8n’s workflow update events.

When I save any workflow, n8n fires a webhook. My backup workflow catches it, extracts the workflow ID, fetches the complete workflow data, and pushes it to GitHub. The commit message records which workflow changed and when.

This creates a real-time backup. Every version of every workflow is preserved the moment I save it.
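The first two steps of that flow (extract the ID, then fetch the full workflow) might look roughly like this in JavaScript. The payload shape `{ workflowId, savedAt }` and the function names are assumptions for illustration; check what your own trigger actually sends. The `/api/v1/workflows/{id}` path is the n8n public API endpoint for a single workflow.

```javascript
// Validate a save notification and pull out the workflow ID.
// The payload shape ({ workflowId, savedAt }) is hypothetical.
function parseSaveEvent(body) {
  if (!body || typeof body.workflowId !== "string" || body.workflowId === "") {
    throw new Error("save event is missing workflowId");
  }
  // Fall back to "now" when the event carries no timestamp.
  const savedAt = new Date(body.savedAt ?? Date.now());
  if (Number.isNaN(savedAt.getTime())) {
    throw new Error("save event has an invalid savedAt timestamp");
  }
  return { workflowId: body.workflowId, savedAt: savedAt.toISOString() };
}

// URL of the n8n public API endpoint that returns one complete workflow.
function workflowUrl(baseUrl, workflowId) {
  const trimmed = baseUrl.replace(/\/+$/, ""); // drop trailing slashes
  return `${trimmed}/api/v1/workflows/${encodeURIComponent(workflowId)}`;
}

const event = parseSaveEvent({
  workflowId: "aB3xYz",
  savedAt: "2024-05-01T10:00:00Z",
});
console.log(workflowUrl("https://n8n.example.com/", event.workflowId));
// https://n8n.example.com/api/v1/workflows/aB3xYz
```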

The GitHub Repository Structure

My backup repository is organized like this:

    n8n-workflows/
    ├── workflows/
    │   ├── customer-data-sync.json
    │   ├── email-automation.json
    │   ├── backup-scheduler.json
    │   └── ...
    ├── credentials/
    │   └── credential-stubs.json
    └── README.md

Each workflow is a separate JSON file named after the workflow (with special characters replaced by underscores). This makes it easy to browse in GitHub and see what changed in each commit.

I don’t store actual credentials—only references. The credential values stay in n8n’s encrypted database.

What Worked

Using n8n’s API for Export

n8n ships with an “n8n” node that can call your own instance’s API. This was surprisingly simple to set up. I created an API key in my n8n settings, added it as a credential, and the n8n node could fetch all my workflows.

The API returns complete workflow definitions as JSON, including all nodes, connections, and settings. I didn’t need to parse or transform anything—just save the JSON directly to GitHub.
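If you call the API directly instead of through the n8n node, the fetch might look like the sketch below. It assumes the public API's `X-N8N-API-KEY` header and cursor-based pagination (`limit`, `cursor`, `nextCursor`); the base URL, the page size, and the `fetchImpl` parameter (there only so the function can be tested without a network) are placeholders of mine.

```javascript
// Fetch every workflow from the n8n public API, following cursor pagination.
// Note: an absolute path is used, so a base URL with a path prefix would need adjusting.
async function listAllWorkflows(baseUrl, apiKey, fetchImpl = fetch) {
  const workflows = [];
  let cursor;
  do {
    const url = new URL("/api/v1/workflows", baseUrl);
    url.searchParams.set("limit", "100"); // page size is an assumption
    if (cursor) url.searchParams.set("cursor", cursor);

    const response = await fetchImpl(url.toString(), {
      headers: { "X-N8N-API-KEY": apiKey, Accept: "application/json" },
    });
    if (!response.ok) {
      throw new Error(`n8n API returned ${response.status}`);
    }

    const page = await response.json();
    workflows.push(...page.data);
    cursor = page.nextCursor; // empty on the last page
  } while (cursor);
  return workflows;
}
```

A call such as `await listAllWorkflows("https://n8n.example.com", apiKey)` would then return the full list, ready to be written out one file at a time.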

GitHub’s File API

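n8n’s GitHub node has separate operations for creating and editing files. You can’t just “save”: you need to check whether the file exists first, then choose create or edit accordingly. Underneath, GitHub’s REST API uses a single endpoint for both, but an update must include the file’s current SHA, so the existence check is needed either way.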

I use the “Get File” operation with error handling. If it succeeds, the file exists and I get back its SHA (GitHub’s version identifier). If it fails, the file doesn’t exist. Then I route to either “Create File” or “Edit File” based on that result.

For updates, you must provide the current SHA. This guards against lost updates when two processes try to update the same file at once: GitHub rejects the write that carries a stale SHA, which is exactly what you want.
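For anyone calling GitHub directly rather than through the n8n node, the request could be assembled roughly as follows. This is a sketch against GitHub's "create or update file contents" endpoint (`PUT /repos/{owner}/{repo}/contents/{path}`); the function name and the owner, repository, and path values are placeholders.

```javascript
// Build the request for GitHub's "create or update file contents" endpoint.
// Pass `sha` only when the file already exists.
function buildPutFileRequest({ owner, repo, path, workflow, message, sha }) {
  const json = JSON.stringify(workflow, null, 2) + "\n";
  const body = {
    message,
    // The endpoint expects the file content base64-encoded.
    content: Buffer.from(json, "utf8").toString("base64"),
  };
  if (sha) body.sha = sha; // required for updates; a stale value is rejected

  // Encode each path segment but keep the slashes between them.
  const encodedPath = path.split("/").map(encodeURIComponent).join("/");
  return {
    method: "PUT",
    url: `https://api.github.com/repos/${owner}/${repo}/contents/${encodedPath}`,
    body,
  };
}

const request = buildPutFileRequest({
  owner: "me",
  repo: "n8n-workflows",
  path: "workflows/email-automation.json",
  workflow: { name: "Email Automation", nodes: [] },
  message: "Update: Email Automation",
  sha: "abc123",
});
console.log(request.url);
```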

Sanitizing Workflow Names

Workflow names can contain spaces, special characters, and symbols that don’t work well in filenames. I use a simple regex to replace anything that’s not alphanumeric, dash, or underscore:

{{ $json.name.replace(/[^a-zA-Z0-9_-]/g, '_') }}

This turns “Customer Data: Sync & Process” into “Customer_Data__Sync___Process.json”. Not beautiful, but consistent and safe.
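The same rule as a standalone JavaScript function, with the `.json` extension added. The function name is mine; the replacement rule is the one described above.

```javascript
// Turn a workflow name into a safe file name.
// Every character that is not a letter, digit, underscore, or dash becomes "_".
function workflowFileName(name) {
  return name.replace(/[^a-zA-Z0-9_-]/g, "_") + ".json";
}

console.log(workflowFileName("Customer Data: Sync & Process"));
// Customer_Data__Sync___Process.json
```

One thing to watch for: different names can map to the same file. Both "Daily Report" and "Daily_Report" become Daily_Report.json, so if your instance has names that close together, adding the workflow ID to the file name is one way around it.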

Commit Messages That Matter

My commit messages follow a pattern:

- For new workflows: “Add: [workflow name]”

- For updates: “Update: [workflow name] – [timestamp]”

This makes the Git history readable. I can scan commits and immediately see which workflow changed and when.
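As a small sketch, the pattern can be generated like this. The function name is hypothetical, and the ISO 8601 timestamp format is my assumption; any consistent format would do.

```javascript
// Build a commit message following the "Add:" / "Update:" pattern.
// `fileExists` says whether the workflow already has a file in the repository.
function commitMessage(workflowName, fileExists, now = new Date()) {
  return fileExists
    ? `Update: ${workflowName} – ${now.toISOString()}`
    : `Add: ${workflowName}`;
}

console.log(commitMessage("Email Automation", false));
// Add: Email Automation
console.log(
  commitMessage("Email Automation", true, new Date("2024-05-01T02:00:00Z"))
);
// Update: Email Automation – 2024-05-01T02:00:00.000Z
```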

Error Handling

I set the GitHub “Check File Exists” node to continue on error. If the file doesn’t exist, it errors, but the workflow continues and routes to the “Create” path. Without this setting, the whole workflow would fail on the first new workflow.

For actual failures (API rate limits, network issues, authentication problems), I have a separate error workflow that sends me a notification. I don’t want silent failures.
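Expressed in code, the split between "expected miss" and "real failure" might look like the sketch below. It assumes you have the HTTP status of the file lookup available; the function name is hypothetical.

```javascript
// Interpret the outcome of a "does this file exist?" lookup.
//   200 -> the file exists, return its SHA (update path)
//   404 -> expected miss, return null (create path)
//   else -> a real failure that should reach the error workflow
function shaFromLookup(status, body) {
  if (status === 200) return body.sha;
  if (status === 404) return null;
  throw new Error(`GitHub lookup failed with status ${status}`);
}

console.log(shaFromLookup(200, { sha: "abc123" })); // abc123
console.log(shaFromLookup(404, {})); // null
```

The point of the split is that only a 404 is swallowed. Anything else still throws, so rate limits and authentication problems are not mistaken for "file not found".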

What Didn’t Work

Trying to Back Up on Every Execution

My first version tried to back up workflows after every execution, not just saves. Bad idea. Some of my workflows run every few minutes. That created hundreds of commits per day, all identical.

GitHub’s API has rate limits. I hit them within hours. Even after fixing the rate limit issue, the repository became unusable—thousands of commits with no meaningful changes.

I switched to backup-on-save only. Much cleaner.
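A complementary guard, which is not part of my setup as described here, is to skip the commit when the content hasn't changed. The SHA that GitHub returns for a file is Git's blob hash, so it can be recomputed locally and compared. A minimal sketch using Node's built-in crypto module, with hypothetical function names:

```javascript
const { createHash } = require("node:crypto");

// Compute the SHA-1 that Git assigns to a blob with this content:
// sha1("blob " + <byte length> + "\0" + <content>).
function gitBlobSha(content) {
  const bytes = Buffer.from(content, "utf8");
  return createHash("sha1")
    .update(`blob ${bytes.length}\0`)
    .update(bytes)
    .digest("hex");
}

// True when the file is new or its content differs from what GitHub holds.
function needsCommit(newContent, currentSha) {
  return currentSha === null || gitBlobSha(newContent) !== currentSha;
}

console.log(gitBlobSha("")); // e69de29bb2d1d6434b8b29ae775ad8c2e48c5391
```

This only works if the JSON is serialized the same way every time, since a change in whitespace or key order counts as a change.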

Storing Everything in One Commit

I initially tried to commit all workflows in a single operation—fetch everything, build one big commit with multiple files. This seemed more efficient than one commit per workflow.

It was a nightmare to debug. When something failed, I couldn’t tell which workflow caused the problem. And GitHub’s diff view was useless when 50 files changed in one commit.

One commit per workflow is slower but far more maintainable. I can see exactly what changed in each workflow.

Not Handling Workflow Deletions

My backup workflow creates and updates files, but it doesn’t delete them. If I delete a workflow in n8n, the file stays in GitHub.

I thought about adding deletion logic, but decided against it. If I accidentally delete a workflow, I want the backup to survive. I can manually delete the GitHub file if I really want it gone.

This means my GitHub repository accumulates old workflows. I’m okay with that—storage is cheap, and having extra backups has saved me twice.

Credential Handling

Credentials are tricky. The n8n API returns credential references in workflows, but not the actual values (for obvious security reasons). So my backups contain credential IDs and names, but not passwords or API keys.

This means I can’t fully restore a workflow from GitHub alone—I need the credentials to exist in the target n8n instance. For disaster recovery, I maintain a separate encrypted backup of credential values using a different system.

I tried exporting credential schemas (the structure without values), but n8n’s API doesn’t support that well. I ended up documenting required credentials manually in the repository README.
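The list of required credentials can be derived from the backed-up JSON rather than written by hand. The sketch below assumes each node may carry a `credentials` object keyed by credential type, with an `id` and `name` inside; verify that against your own exports. The function name is hypothetical.

```javascript
// Collect the credential references (type, id, name) used by a workflow.
// Assumed node shape: { credentials: { githubApi: { id: "7", name: "GitHub backup" } } }
function listCredentialRefs(workflow) {
  const seen = new Map();
  for (const node of workflow.nodes || []) {
    for (const [type, ref] of Object.entries(node.credentials || {})) {
      // Key on type and id so a credential shared by several nodes appears once.
      seen.set(`${type}:${ref.id}`, { type, id: ref.id, name: ref.name });
    }
  }
  return [...seen.values()];
}

const refs = listCredentialRefs({
  nodes: [
    { name: "Get File", credentials: { githubApi: { id: "7", name: "GitHub backup" } } },
    { name: "Edit File", credentials: { githubApi: { id: "7", name: "GitHub backup" } } },
    { name: "Set", parameters: {} },
  ],
});
console.log(refs);
```

Only references come out of this, never secret values, so the result is safe to commit.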

Rollback on Execution Failures

Having backups is one thing. Using them when something breaks is another.

Manual Rollback Process

When a workflow fails and I need to revert, my process is:

- Find the last working version in GitHub’s commit history

- Copy the JSON from that commit

- Open the workflow in n8n

- Paste the JSON in the workflow’s code view

- Save and test

This works, but it’s manual and slow. In a production incident, I’m fumbling through GitHub commits while systems are broken.
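Part of that fumbling can be scripted. GitHub's REST API can list the commits that touched one file (`GET /repos/{owner}/{repo}/commits?path=...`) and return the file as of a given commit (`GET /repos/{owner}/{repo}/contents/{path}?ref=...`). A sketch of the URL building and decoding, with hypothetical function names and placeholder repository values:

```javascript
// URL that lists the commits touching one file, newest first.
function historyUrl(owner, repo, path) {
  const url = new URL(`https://api.github.com/repos/${owner}/${repo}/commits`);
  url.searchParams.set("path", path);
  return url.toString();
}

// URL that returns the file as it was at a specific commit.
function fileAtCommitUrl(owner, repo, path, commitSha) {
  const url = new URL(
    `https://api.github.com/repos/${owner}/${repo}/contents/${path}`
  );
  url.searchParams.set("ref", commitSha);
  return url.toString();
}

// The contents endpoint returns the file base64-encoded in `content`.
function decodeWorkflow(contentsResponse) {
  const json = Buffer.from(contentsResponse.content, "base64").toString("utf8");
  return JSON.parse(json);
}

console.log(historyUrl("me", "n8n-workflows", "workflows/email-automation.json"));
```

The decoded JSON is what gets restored into n8n.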

Automated Rollback Attempt

I tried building an automated rollback workflow. The idea: when a workflow execution fails, automatically fetch the previous version from GitHub and restore it.

This failed for several reasons:

Detection is hard. How do you distinguish between a workflow failing because of bad input versus failing because the workflow itself is broken? I couldn’t reliably detect “this workflow is now broken” versus “this execution had bad data.”

Race conditions. If a workflow is running multiple executions simultaneously, automatic rollback could revert while other executions are still using the new version. This created inconsistent behavior.

Cascading failures. If the rollback workflow itself has a bug, you can’t roll it back automatically. I needed a way to escape the automation when things went really wrong.

I abandoned automatic rollback. Manual rollback with good monitoring turned out to be more reliable.

What I Use Instead

I have a monitoring workflow that tracks execution failures. When a workflow fails repeatedly (three times in ten minutes), it sends me an alert with:

- The workflow name and ID

- Error messages from failed executions

- A direct link to the GitHub commit history for that workflow

- The timestamp of the last successful execution

This gives me everything I need to manually roll back quickly. I can see when it last worked, review what changed since then, and restore the working version.
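The "three times in ten minutes" rule and the alert contents can be sketched like this. Timestamps are assumed to be milliseconds since the epoch, and the function names, field names, and repository values are hypothetical.

```javascript
const WINDOW_MS = 10 * 60 * 1000; // ten minutes
const THRESHOLD = 3; // failures needed to trigger an alert

// True when at least THRESHOLD failures fall within WINDOW_MS before `now`.
function shouldAlert(failureTimes, now) {
  const recent = failureTimes.filter(
    (time) => time <= now && now - time <= WINDOW_MS
  );
  return recent.length >= THRESHOLD;
}

// Assemble the alert, including a link to the file's commit history on GitHub.
function buildAlert({ workflowId, workflowName, errors, lastSuccess, repoUrl, branch, path }) {
  return {
    workflowId,
    workflowName,
    errors,
    lastSuccess,
    historyLink: `${repoUrl}/commits/${branch}/${path}`,
  };
}

const now = Date.parse("2024-05-01T10:10:00Z");
const minutes = (n) => n * 60 * 1000;
console.log(shouldAlert([now - minutes(1), now - minutes(5), now - minutes(9)], now)); // true
console.log(shouldAlert([now - minutes(1), now - minutes(5), now - minutes(11)], now)); // false
```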

Not as elegant as automatic rollback, but it actually works in practice.

Key Takeaways

Daily backups aren’t enough. If you only back up once per day, you can lose a full day’s work. Back up on save or at least every few hours.

One commit per workflow is cleaner than bulk commits. It’s slower, but the Git history is actually useful. You can see what changed in each workflow without wading through dozens of files.

Don’t try to back up everything. Credentials can’t be safely backed up to Git. Document what credentials are needed instead.

Automatic rollback is harder than it sounds. Detecting when a workflow is truly broken (versus just processing bad data) is difficult. Manual rollback with good monitoring is more reliable.

GitHub’s API has rate limits. If you’re backing up frequently or have many workflows, you’ll hit them. Batch operations where possible, and don’t back up on every execution.

Sanitize filenames. Workflow names can contain characters that break file systems. Replace them with something safe.

Error handling is critical. Your backup workflow will encounter errors—missing files, API failures, network issues. Handle them gracefully or you’ll have silent failures.

The system I built isn’t perfect, but it’s saved me multiple times. I can confidently make changes knowing I can always roll back. I can collaborate without fear of overwriting someone else’s work. And when disaster strikes, I have a complete history to restore from.

That peace of mind is worth the time it took to build.