Why I Built Connection Pooling Alerts for PgBouncer

I run PostgreSQL behind PgBouncer in my self-hosted stack. For months, everything worked fine under normal load. Then one day, a batch job I wrote started hammering the database with thousands of short-lived connections. The app didn’t crash, but response times spiked, and I had no clear signal that PgBouncer’s connection queue was filling up.

I needed alerts—not just dashboards I might check once a week. I wanted Prometheus to tell me when connection pooling was under stress, before users noticed slowdowns.

My Actual Setup

I run this on a Proxmox VM with Docker containers:

- PostgreSQL 15 running directly on the VM (not containerized, for simpler backup management)

- PgBouncer in transaction pooling mode, listening on port 6432

- Prometheus and Grafana in Docker Compose

- pgbouncer_exporter to expose PgBouncer metrics to Prometheus

I chose transaction pooling because my workloads are mostly short API calls and background jobs. Session pooling would have tied up connections unnecessarily. Statement pooling was too aggressive for my use case and required changes in application logic I didn’t want to make.

The Problem with Default Monitoring

PgBouncer has a built-in SHOW STATS command, but it’s not useful for alerting. You have to manually connect and query it. Grafana dashboards are great for exploration, but they don’t wake you up at 2 AM when something’s wrong.

I needed:

- Metrics exposed in a format Prometheus could scrape

- Alert rules that fired when connection queues grew too long

- A way to know if clients were waiting for available connections

Setting Up pgbouncer_exporter

I used the official prometheus-community/pgbouncer_exporter. Here’s my Docker Compose snippet:

pgbouncer-exporter:

image: prometheuscommunity/pgbouncer-exporter:latest

environment:

PGBOUNCER_HOST: 192.168.1.50

PGBOUNCER_PORT: 6432

PGBOUNCER_USER: pgbouncer_stats

PGBOUNCER_PASS: my_stats_password

ports:

- "9127:9127"

restart: unless-stopped

I created a dedicated pgbouncer_stats user in PgBouncer’s userlist.txt with read-only access to stats. This user doesn’t need database privileges—it only queries PgBouncer’s internal stats database.

One mistake I made initially: I tried using MD5 authentication without properly hashing the password in userlist.txt. PgBouncer rejected connections until I generated the hash correctly:

echo -n "my_stats_passwordpgbouncer_stats" | md5sum

Then I added it to userlist.txt like this:

"pgbouncer_stats" "md5<hash_output>"

Configuring Prometheus to Scrape PgBouncer Metrics

In my prometheus.yml, I added a scrape job:

scrape_configs:

- job_name: 'pgbouncer'

static_configs:

- targets: ['192.168.1.50:9127']

After restarting Prometheus, I checked the targets page (http://prometheus:9090/targets) and confirmed the exporter was being scraped successfully.

Key Metrics I Actually Use

The exporter exposes dozens of metrics, but I only alert on a few:

pgbouncer_pools_server_active_connections— Active server connections to Postgrespgbouncer_pools_server_idle_connections— Idle connections in the poolpgbouncer_pools_client_waiting_connections— Clients waiting for a connectionpgbouncer_stats_queries_duration_seconds— Query latency through PgBouncer

The most critical one is client_waiting_connections. If this number stays above zero for more than a minute, it means PgBouncer can’t keep up with demand.

Writing Alert Rules

I created a pgbouncer_alerts.yml file and mounted it into Prometheus:

groups:

- name: pgbouncer

interval: 30s

rules:

- alert: PgBouncerClientsWaiting

expr: pgbouncer_pools_client_waiting_connections > 0

for: 1m

labels:

severity: warning

annotations:

summary: "PgBouncer has clients waiting for connections"

description: "{{ $value }} clients are waiting for available connections in pool {{ $labels.database }}"

- alert: PgBouncerPoolExhausted

expr: pgbouncer_pools_server_active_connections / (pgbouncer_pools_server_active_connections + pgbouncer_pools_server_idle_connections) > 0.9

for: 2m

labels:

severity: critical

annotations:

summary: "PgBouncer connection pool is nearly exhausted"

description: "Pool utilization is {{ $value | humanizePercentage }} for database {{ $labels.database }}"

The first alert fires if any clients are stuck waiting. The second fires if the pool is over 90% utilized for more than two minutes.

I tested these by running a script that opened 100 connections simultaneously. The alert triggered as expected.

What Didn’t Work

Initially, I tried alerting on pgbouncer_stats_total_query_time, thinking it would catch slow queries. It didn’t. That metric is cumulative, not a rate, so the alert never fired correctly. I had to switch to rate(pgbouncer_stats_total_query_time[1m]) to make it useful.

I also tried setting pool_mode = statement to maximize connection reuse. It broke my application immediately because some queries depended on temporary tables that don’t survive statement-level pooling. I reverted to transaction pooling.

Integrating Alerts with Notifications

I send alerts to a Slack channel using Prometheus Alertmanager. My alertmanager.yml looks like this:

route:

receiver: 'slack'

group_by: ['alertname', 'database']

group_wait: 10s

group_interval: 5m

repeat_interval: 4h

receivers:

- name: 'slack'

slack_configs:

- api_url: 'https://hooks.slack.com/services/YOUR/WEBHOOK/URL'

channel: '#alerts'

text: '{{ range .Alerts }}{{ .Annotations.summary }}

{{ .Annotations.description }}

{{ end }}'

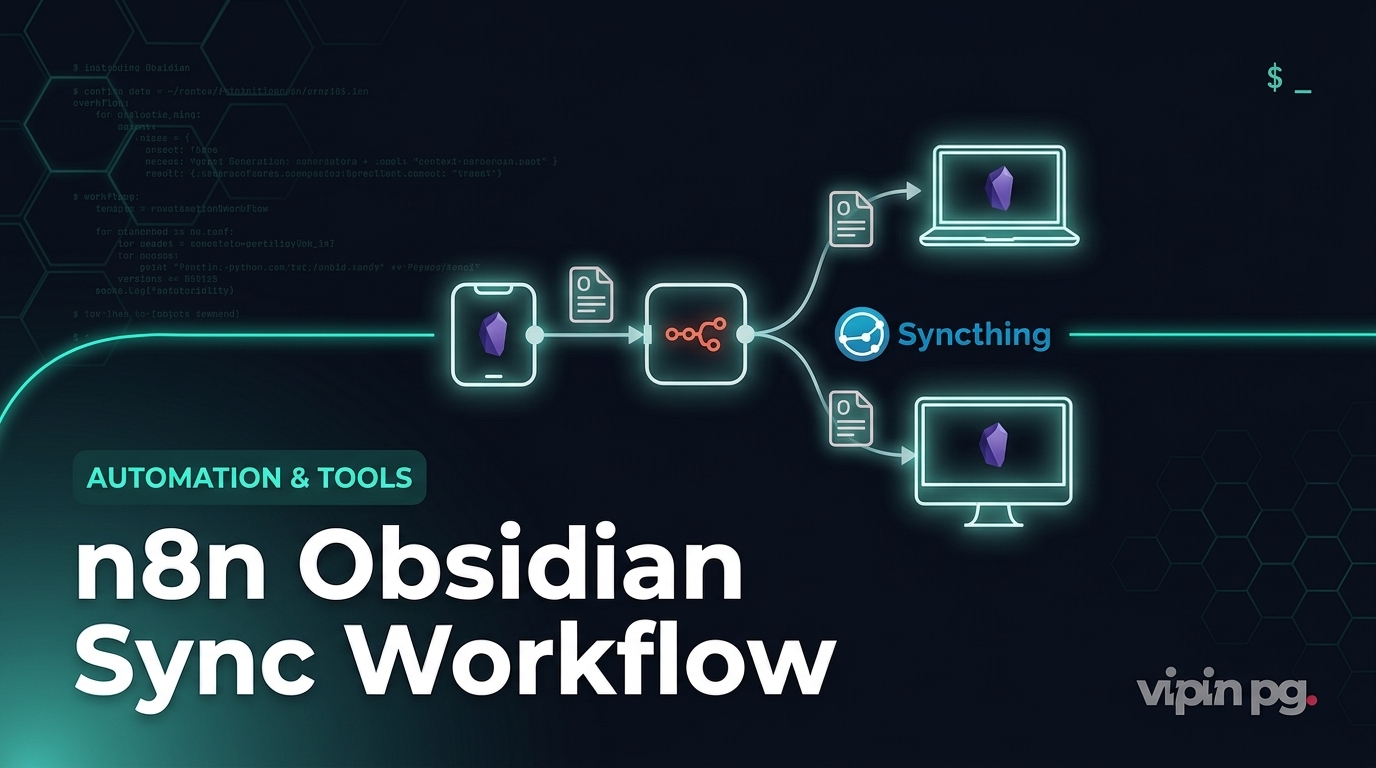

I also experimented with sending alerts to n8n for more complex workflows, but Slack was simpler and more reliable for this use case.

Lessons from Running This in Production

After a few months, here’s what I learned:

- Pool size matters more than I thought. I started with

default_pool_size = 20andmax_client_conn = 100. Under load, clients would queue up. I bumpeddefault_pool_sizeto 40, and the problem disappeared. - Transaction pooling has limits. If a client holds a connection open without starting a transaction (like during an HTTP request with multiple queries), PgBouncer can’t reuse it. I had to audit my code to ensure transactions were explicit.

- Alerts need tuning. My first version of the “clients waiting” alert fired constantly during normal traffic spikes. I increased the

forduration from 30 seconds to 1 minute, which reduced noise. - Grafana dashboards are still essential. Alerts tell me something is wrong, but I need Grafana to understand why. I imported dashboard 14022 from Grafana.com, which shows pool utilization over time.

Why I Didn’t Use Cloud Monitoring

I could have used AWS RDS with built-in connection pooling and CloudWatch alerts. But I self-host for a reason: I want full control, no vendor lock-in, and no monthly bills that scale with usage. Running Prometheus and PgBouncer costs me nothing beyond the electricity for my Proxmox server.

Key Takeaways

- PgBouncer’s built-in stats are not enough. You need an exporter to make them useful.

- Alert on

client_waiting_connectionsfirst. It’s the clearest signal that your pool is undersized. - Transaction pooling works well for most web apps, but you need to be deliberate about transaction boundaries in your code.

- Test your alerts by simulating load. Don’t wait for a real incident to find out they don’t work.

- Dashboards and alerts serve different purposes. You need both.

This setup has saved me from at least two incidents where connection exhaustion would have caused downtime. It’s not glamorous, but it works.